Multi-agent intelligence platform

The quick version

I designed the end-to-end experience for a multi-agent AI platform where specialist agents work together to monitor, analyse, and respond to threats and opportunities in real time. The core design challenge: how do you make a system of autonomous AI agents legible, trustworthy, and controllable for users who don't have time to manage AI, but can't afford to ignore it?

Logically Intelligence already served enterprise and government clients across reputation management, national security, and threat intelligence. The platform was built around the OODA loop (Observe, Orient, Decide, Act) but stopped at Orient. A coordinated team of specialist agents could close that gap. There were no established patterns for this, so I designed the information architecture from scratch and used Claude Code to build a working prototype of the full experience, one that stakeholders and users interacted with directly during validation.

My role: Sole product designer, embedded with data science and engineering.

Core team: Data science, engineering, OSINT analysts.

Tools: Figma, Claude Code (prototyping).

The people using this

I conducted persona research to understand three distinct user types who rely on this platform in fundamentally different ways. All three are under pressure, dealing with fast-moving situations, and need the most important information immediately.

- The communicator protects a brand but isn't technical. They need the platform to surface what matters, explain why, and recommend what to do. Their focus: Decide and Act.

- The threat intelligence analyst lives in the data. Trajectory, coordination patterns, actors, provenance. Depth and defensible methodology. Their focus: Observe and Orient.

- The strategy leader sits between these worlds. Early warning, forward-looking intelligence, decisions. They move across the full OODA loop depending on what's urgent.

These personas drove five design principles that shaped every decision in the project.

Setting up a mission

Before agents can work, the system needs to understand what you're trying to achieve. I designed mission creation as a conversational setup rather than a configuration form — users describe their goal in natural language, and agents ask follow-up questions to refine the scope. This shapes the agent team, monitoring parameters, and success metrics from the start.

Mission creation. Users describe goals, agents handle the setup

Canvas, not dashboard

I explored chat-first, dashboard-heavy, and hybrid layouts before arriving at the canvas model — it lets agents lead with what matters rather than waiting for users to ask. The canvas surfaces only what requires attention right now, with the flexibility to add, remove, or resize any widget on demand. Chat is still part of the experience: by default you're talking to the Squad Lead, but you can switch to any specialist.

The metaphor that guided my design: your agents are expert colleagues, and you're the manager. You need them to brief you on what matters, flag when something needs your approval, and execute when you give the go-ahead.

Canvas chosen over chat-first and dashboard-heavy layouts — agents lead with what matters

Layout exploration

The event analyzer

Built by the data science team, this is the system's front line: the agent that detects, classifies, and scores both risks and opportunities across monitored channels. Working closely with data scientists, I designed the user-facing flow through the event lifecycle and connected it to goal creation. The linear path exists as guidance, not a constraint: users can trigger any step at any point.

Event lifecycle flow

Once a goal is created, the Event Analyzer continues monitoring, tracks whether the goal is on track, and surfaces new recommendations if the situation shifts. What users see is simple: ranked risks and opportunities, each with AI insights and recommended actions.

Ranked risks and opportunities

Simulation: answering "what happens next?"

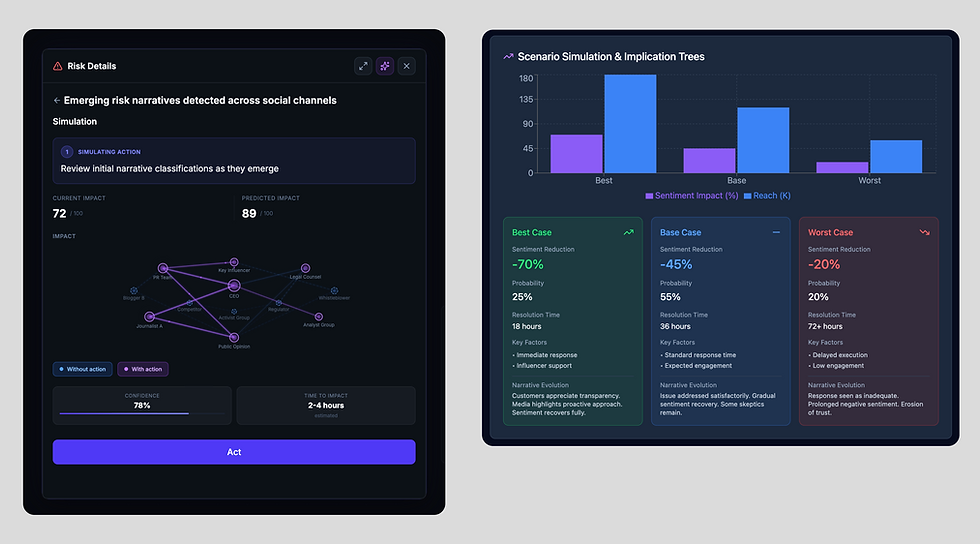

Simulation is one of the most critical parts of the experience. The key design decision: show both scenarios side by side. Not just "what happens if I act?" but equally "what happens if I do nothing?" That comparison is what turns a recommendation into an informed decision.

Simulation view. Exploring how to communicate what happens next — from stakeholder network to scenario comparison

This is also where explainability becomes tangible — the view serves all three personas at different depths without forcing anyone into the wrong level of detail.

After an action is approved, the Evolution view closes the loop — tracking predicted versus actual outcomes and triggering reassessment if the goal goes off track. Resolved events generate postmortem reports that feed back into the knowledge base, so the system improves with use.
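To make the "predicted versus actual" check concrete, here is a minimal sketch of how a reassessment trigger might work. It is an illustration under stated assumptions, not the platform's implementation: the function name `needs_reassessment`, the single numeric goal metric, and the 15% relative tolerance are all hypothetical.

```python
from typing import Sequence


def needs_reassessment(
    predicted: Sequence[float],
    actual: Sequence[float],
    tolerance: float = 0.15,  # assumed: 15% relative deviation counts as "off track"
) -> bool:
    """Compare the latest observed value with the prediction for the same step.

    Returns True when the relative gap exceeds `tolerance`, which in this
    sketch stands in for "the goal has gone off track".
    """
    if not actual:
        return False  # nothing observed yet, so nothing to reassess
    if len(actual) > len(predicted):
        raise ValueError("more observations than predicted steps")

    step = len(actual) - 1
    expected, observed = predicted[step], actual[step]
    scale = max(abs(expected), 1e-9)  # avoid dividing by zero
    return abs(observed - expected) / scale > tolerance


# Hypothetical metric: daily volume of negative mentions after an approved action.
forecast = [100.0, 90.0, 80.0]
on_track = needs_reassessment(forecast, [100.0, 92.0])
drifted = needs_reassessment(forecast, [100.0, 92.0, 100.0])
```

In this sketch only the most recent step is compared; a real system would more likely look at a trend over several steps before asking users to revisit a decision.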

Evolution view. Tracking goal progress over time, actual vs predicted

Asking agents about deviations and getting actionable recommendations

Building trust

No matter how good the recommendations are, users won't act on them if they don't trust the system.

- Approval control. A threshold-based model configurable per agent, per risk level: low-priority actions execute autonomously; critical events halt until a human decides. Stakeholder feedback confirmed the risk of biased assessments if agents run unchecked, and the approval model was designed to address this directly.

- No black box. A direct requirement from analyst research: agents must show their work. Every recommendation includes reasoning, confidence levels, and links to sources. The Agent Activity feed gives a real-time log of every action across the team, for full transparency.

- Proof, not just claims. Every AI insight is backed by evidence users can inspect. Sources are linked, scores are broken down, and every prediction includes a "why." Agents don't just tell you what to think; they show you what they found.
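As a rough illustration of the approval control described above, the threshold model could be expressed along these lines. This is a sketch under assumptions, not the shipped design: the four-step `RiskLevel` scale, the `ApprovalPolicy` type, the agent keys, and the specific thresholds are all hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskLevel(IntEnum):
    # Assumed four-step scale; the real platform may use different levels.
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class ApprovalPolicy:
    """Hypothetical per-agent setting: actions at or above
    `require_human_from` wait for a person to decide."""
    agent: str
    require_human_from: RiskLevel


# Example configuration: each agent gets its own threshold.
POLICIES = {
    "event_analyzer": ApprovalPolicy("event_analyzer", RiskLevel.HIGH),
    "simulation_agent": ApprovalPolicy("simulation_agent", RiskLevel.MEDIUM),
}


def route_action(agent: str, risk: RiskLevel) -> str:
    """Return 'auto_execute' below the agent's threshold, else 'await_human'."""
    policy = POLICIES[agent]
    if risk >= policy.require_human_from:
        return "await_human"
    return "auto_execute"


low_priority = route_action("event_analyzer", RiskLevel.LOW)
critical = route_action("event_analyzer", RiskLevel.CRITICAL)
```

Keeping the threshold as a single value per agent makes the rule easy to explain to a non-technical user: anything at or above this level stops and waits for you.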

Managing a team of agents. From glanceable activity to deep configuration

Managing a team of agents

The core design challenge: how do you give users visibility into a team of agents working simultaneously without overwhelming them? Too little and it feels like a black box. Too much and it becomes noise.

The answer was progressive disclosure across three levels, each mapped to a different user need:

- Glanceable activity feed for users who just need confidence that nothing has gone wrong.

- OODA task board for those who want to see exactly where each task sits in the intelligence cycle: which agent owns it and what stage it's at.

- Individual agent profiles for deep configuration: skills, tools, approval thresholds, privacy controls. Strategic setup, not daily use.

Agent Tasks OODA board. Observe → Orient → Decide → Act. Real-time log of every action across the agentic team

Learnings and outcomes

Key learnings

- Language carries more weight than you expect. Calling agents "analysts" signalled replacement. Renaming them reinforced augmentation over substitution.

- Designing for multi-agent AI is designing team dynamics. The closest parallel isn't traditional UX but organisational design: visibility, escalation, and incremental trust.

- Not every agent delivers instant results. The Simulation Agent's latency broke the fluid experience; designing meaningful intermediate states mattered more than speed.

- Designing in a space being invented in real time. Interaction patterns evolved day by day; decisions had to be revisited constantly.

Outcomes

Five demo sessions with users and stakeholders confirmed the direction.

- The canvas as proactive briefing resonated immediately. Users had been frustrated by chat-only AI tools that required knowing what to ask; the canvas solved this by leading with what matters rather than waiting.

- The mental model was intuitive enough that users asked how to create their own agents, a signal they weren't just consuming the experience but imagining extending it.

- Users wanted more control over prioritisation: not just seeing what agents flag, but telling the system what matters most in their context. This validated the configurability built into agent settings and approval thresholds.